Modulate is building a different kind of voice technology: software it calls “voice skins.” The software lets anyone change their voice to match a celebrity or cartoon character in an instant. The Cambridge-based startup recently closed a $2M seed round.

We spoke with Modulate’s Co-Founder and CEO Mike Pappas to learn more about voice skins and how anyone could sound like President Obama using the software. We also touched on how the company guards against misuse, such as people impersonating others for unscrupulous reasons.

Colin Barry [CB]: Let’s start at the beginning. How did you and the rest of the team at Modulate come together?

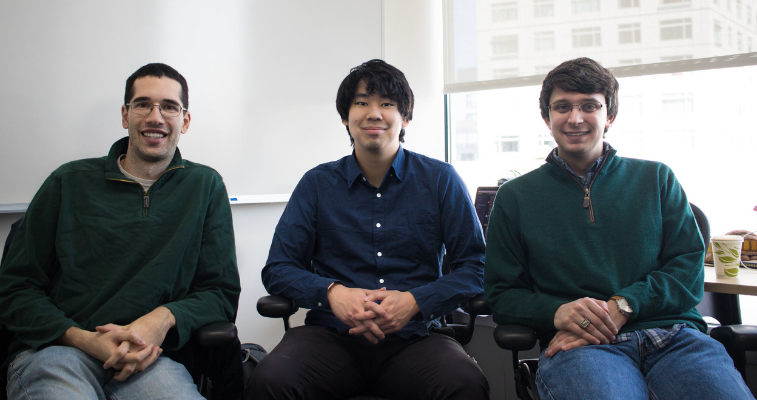

Mike Pappas [MP]: Carter Huffman and I first met as undergraduates at MIT when I came across him working on a physics problem at a whiteboard. We hit it off and quickly became friends and began collaborating on projects, both for and outside of class. After we graduated, we kept in touch and continued working on projects together. When Carter first hit on the idea for voice skins and realized he might know how to make it work, we immediately connected and began brainstorming how to develop that technology into a successful company. More recently, we've enlisted Terry Chen, our VP of Audio. Terry is an old friend of mine with over a decade of audio engineering experience. He was thrilled when we told him about the concept for Modulate, and we jumped at the chance to add his audio expertise to the team.

CB: Why get involved with the voice technology space? Does anyone on the team have prior experience with it?

MP: Modulate isn't voice recognition software - our voice skins actually have no idea what it is that you're saying. (This allows them to work on nearly any language without needing any additional training!) Instead, we effectively swap out your vocal cords - changing how you sound, but not the content or style of your speech.

As for how we started working on voice skins, that comes from Carter. He spent some time at NASA JPL as a machine learning scientist, working mostly on problems involving images - for example, object detection for a spacecraft to avoid collisions. While there, he read about new research into something called visual style transfer, which applies the style of one image to another - for instance, rendering your family photo in the style of Picasso. He was inspired to ask what would happen if you tried audio style transfer instead - and immediately realized how enormously valuable this would be. Thanks to his machine learning experience and his physics background - which focused on signal processing techniques similar to those involved in manipulating audio - he was extremely well placed to be the first to crack this problem.

CB: As someone who enjoys a good impersonation for a comedy skit, I’m curious to know how the technology works. How can I go from sounding like me to sounding like a former President?

MP: When comedians want to impersonate, say, Barack Obama, they don't need to hear him say every single word first - they can listen to only a few short snippets in order to learn what his voice sounds like.

Our technology works the same way. Based on a few short clips of someone's speech, the algorithm learns what kind of voice they have, and stores that information. We then use something called a generative adversarial network (GAN) to actually convert your speech. The GAN consists of a network that tries to make your speech sound more like the target voice, and a second network that, knowing what the target voice sounds like, provides feedback about whether it sounds good enough. Eventually, this feedback loop tops off, with the first network able to convincingly make you sound like the target voice, no matter what words you're saying.
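To make the feedback loop concrete, here is a toy sketch of adversarial training. It is not Modulate's system: it assumes each clip is reduced to a single hypothetical "pitch offset" number, the first network only learns one `shift` to add to the speaker's clips, and the second network is a one-line logistic scorer. The names `train_toy_gan`, `shift`, `w`, `c`, and the small `decay` term (added here only to keep the toy stable) are all illustrative assumptions.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def mean(values):
    return sum(values) / len(values)

def train_toy_gan(source, target, rounds=5000, lr=0.05, decay=0.1):
    shift = 0.0      # first network (generator): converted = source + shift
    w, c = 0.0, 0.0  # second network (discriminator): P(target) = sigmoid(w * y + c)
    for _ in range(rounds):
        fake = [x + shift for x in source]

        # Discriminator step: score real target clips higher and
        # converted clips lower (gradient ascent on the log-likelihood).
        d_real = [sigmoid(w * y + c) for y in target]
        d_fake = [sigmoid(w * y + c) for y in fake]
        grad_w = (mean([(1 - d) * y for d, y in zip(d_real, target)])
                  - mean([d * y for d, y in zip(d_fake, fake)])
                  - decay * w)  # assumption: weight decay to damp oscillation
        grad_c = mean([1 - d for d in d_real]) - mean(d_fake)
        w += lr * grad_w
        c += lr * grad_c

        # Generator step: use the discriminator's feedback to nudge the
        # shift in the direction that makes converted clips score higher.
        d_fake = [sigmoid(w * y + c) for y in fake]
        shift += lr * mean([(1 - d) * w for d in d_fake])
    return shift, w, c

# Hypothetical data: the speaker's clips sit around 0, the target voice around 2.
source_pitch = [-0.5, 0.0, 0.5]
target_pitch = [1.5, 2.0, 2.5]
shift, w, c = train_toy_gan(source_pitch, target_pitch)
print(f"learned shift: {shift:.2f}")
```

In this toy, the learned shift should settle near the gap between the two sets of clips, at which point the scorer can no longer tell them apart. Real voice conversion works on far richer audio features and much larger networks, and adversarial training at that scale usually needs extra stabilization.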

CB: How long was the development process on the technology and what kinds of tools were used?

MP: Carter first began researching voice skins in late 2015. It took nearly two years of experimentation before he landed on a system that was able to convert voices realistically and quickly. Of course, the system is always improving - as more people use it, it continues to learn to sound cleaner and more emotive.

CB: You and your team probably get this question a lot: what if someone uses Modulate’s technology for unscrupulous reasons?

MP: We've certainly thought deeply about this problem! We wanted to make sure to take concrete steps to keep voice skins as a positive technology, so we built in safeguards from the ground up. On the technical side, we watermark all of our audio, so that automated systems can detect that it's synthetic. We're also not allowing just anyone to use any voice - voices of real people or characters can only be purchased by those who own the relevant rights.
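The interview does not say how Modulate's watermark works. As a rough illustration of the general idea, the sketch below uses a simple spread-spectrum scheme: a faint pseudo-random pattern derived from a secret key is added to the audio, and a detector checks whether the audio correlates with that pattern. The function names, the `key` values, and the `strength` setting are all assumptions made for this example.

```python
import math
import random

def keyed_sequence(key, n):
    # Pseudo-random +1/-1 pattern that only someone with the key can regenerate.
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]

def embed_watermark(samples, key, strength=0.02):
    # Add the keyed pattern at low amplitude on top of the audio.
    mark = keyed_sequence(key, len(samples))
    return [s + strength * m for s, m in zip(samples, mark)]

def detect_watermark(samples, key, strength=0.02):
    # Correlate the audio with the keyed pattern. Unmarked audio averages
    # out near zero; marked audio averages out near `strength`.
    mark = keyed_sequence(key, len(samples))
    score = sum(s * m for s, m in zip(samples, mark)) / len(samples)
    return score > strength / 2

# Hypothetical test signal: one second of a 220 Hz tone at 48 kHz.
SAMPLE_RATE = 48000
clean = [0.5 * math.sin(2 * math.pi * 220 * t / SAMPLE_RATE)
         for t in range(SAMPLE_RATE)]
marked = embed_watermark(clean, key=1234)

print("marked audio flagged:", detect_watermark(marked, key=1234))
print("clean audio flagged:", detect_watermark(clean, key=1234))
```

A production watermark would also need to survive compression, resampling, and deliberate removal attempts, which this sketch does not address.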

CB: Switching over to something a little more positive... what would the typical use case be?

MP: Imagine playing a character in an online game - say, Overwatch, as the character Genji - with a distinct appearance and voice. As you engage in voice chat with your friends, you might want your own voice to match that character's, in order to add more depth to the experience.

Many other players have expressed interest in using voice skins to design new, unique voices for their online character - or even simply to mask their real voice so they're more comfortable chatting online. Really, the use cases vary as widely as those for voice chat itself - in every application, there are reasons to want the freedom to design how you'd sound!

CB: What was the inspiration behind the name?

MP: We had three goals for our company name. We wanted it to capture the essence of transformation/customization that voice skins deliver. We wanted it to be simple and clean. And we wanted to stand out from other startups, which to us meant using a properly spelled, real word in our name instead of the many alternatives. (Bring it, Google!)

Modulate hit on all three, and we loved that we could use it to describe what our technology was doing more concretely as well, so it quickly stuck.

CB: Any additional comments you’d like to make?

MP: We're hiring! We're particularly focused on engineers who can help us continue improving our technology and develop integrations with other voice platforms, but of course are always interested in connecting with anyone that shares our passion for voice skins! See more info at modulate.ai/careers or feel free to reach out at [email protected]!

Colin Barry is the Content Manager at VentureFizz. Follow him on Twitter @ColinKrash.

Images courtesy of Modulate